Inside the Robot Mind

What viral humanoid demos reveal (and hide) about robot intelligence

In late 2025, the Unitree G1 took center stage in the world of humanoid demos. Smaller and cheaper than the previous H1, it has been upgraded continuously with increasingly dramatic demos: dance routines, punches, roundhouse kicks. By the 2026 Spring Festival Gala dozens of G1 robots were performing synchronized kung fu on national television.

Unitree G1 performs in Beijing

But here’s the thing. What we were watching was largely the least cognitive layer of the robot’s brain: the body controller.

Unitree has been fairly open about how this works (see here and here). Those kung-fu moves originate as “motion capture” data recorded directly from human expert kung-fu performances. Unitree has even open-sourced the dataset. A digital twin of the robot first learns to imitate those motion-capture movements inside Nvidia’s Isaac simulator, which is then refined through reinforcement learning until the robot can physically reproduce the moves without falling over. That’s a real achievement — keeping the two-legged, 77lb G1 balanced through such moves is extremely hard engineering and I don’t want to minimize that. But the robot wasn’t deciding to throw a spinning kick. It wasn’t perceiving an opponent. It wasn’t planning a sequence of attacks. There was no cognition and reasoning that we normally associate with a brain.

Unitree itself claims the performance was "fully autonomous" but in robotics that word can mean something different. Technically, the robots were autonomous while their body controller was replaying motion capture kung-fu moves. But only in the sense that a human was not controlling them at that moment. Not in the sense of perception and self-decision making.

Let’s compare this with a Boston Dynamics video of Atlas, their flagship humanoid:

Boston Dynamics’ Atlas training in a factory

This robot is in a Hyundai factory setting, slowly slotting engine covers into place. It’s a bit boring to watch. The robot pauses, re-estimates and moves deliberately. But this video is showing a full range of perception and decision-making by the robot. A planner is figuring out how to sequence the task. Perception is identifying parts and estimating where to place them. An action policy is generating real-time grasping and placement. And underneath it all, the body controller is keeping the humanoid stable while its arms do precision work.

The viral kung-fu videos showed us the lowest layer of the brain. The ho-hum factory worker one points to the future.

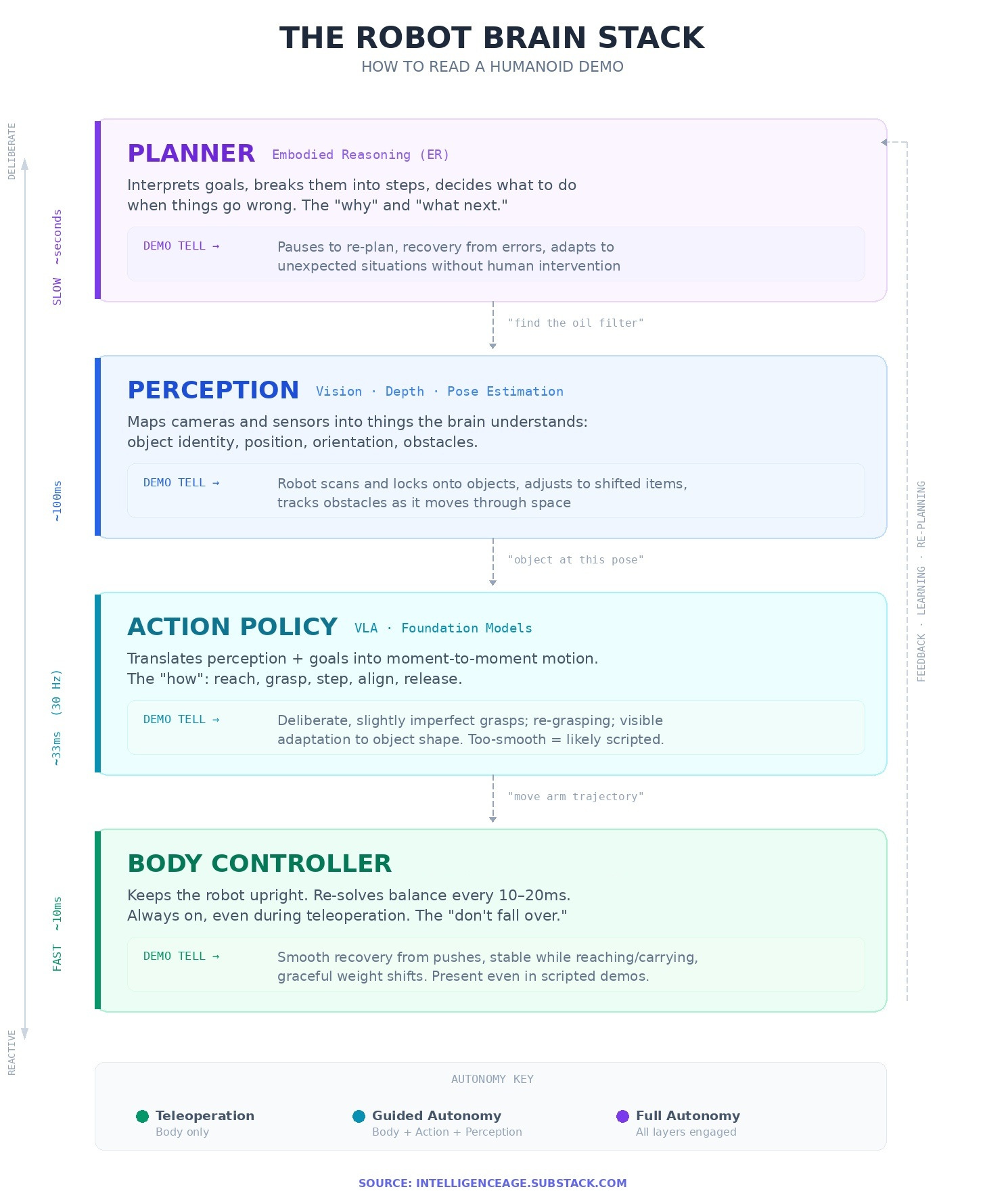

To understand the difference we need to get familiar with the robot brain stack.

How would a full robot brain stack get a job done? Let’s say you have a mechanic in a future car repair shop with a humanoid robot helper. “Bring me an oil filter from the parts shelf”, the mechanic tells the robot. The request first wakes up the robot brain’s planner layer: “make a plan!”. The planner reasons through it and decides: “I need to go to Shelf B, find the oil-filter box, pick one, return.” It then tells perception what to look for—“oil filter on Shelf B”—and perception scans the shelf, uses image recognition to find the right box, and gives back its location and orientation i.e. the pose. With that known, the action policy activates next: “reach and grasp at this pose.” The action policy starts generating the moment-to-moment motions—step closer, extend arm, align gripper, close fingers—while the body controller quietly keeps the robot balanced - and limits forces if it bumps the shelf. After the pickup, perception confirms “object in hand,” the planner switches to “walk back,” and the robot returns, oil filter in hand. Meanwhile training can record the whole thing (what the robot saw, how it moved, whether it hesitated or bumped anything) so later the system can improve.

The Atlas robot is a good template for understanding the individual brain layers because its stack is quite well known.

Aside from open documentation, another good reason to study Atlas is the partnerships. After Hyundai acquired Boston Dynamics in 2021, Atlas developed into a vast collaboration with some of the largest AI/robotics research firms like Deepmind, Nvidia and Toyota. So if one wants to learn what a state of the art humanoid looks like in early 2026, Atlas is the place to start.

The Atlas Brain

Body Controller

Humanoids are harder than self-driving cars or fixed robots. A car can stop and sit indefinitely. So can a roomba. But humanoids are constantly negotiating gravity. Even standing still is a problem to be solved.

Human minds solve this balancing problem in the cerebellum. The specific type of body control Atlas uses is “Model Predictive Control” (MPC). The MPC continually runs the physics of the robot’s posture and the objects touching it through a model. It repeatedly answers questions like: “If I apply so-and-so sequence of contact forces over the next 0.5–2 seconds, how will the robot move? Will it stay upright, slip or fall?”. The MPC then chooses the best sequence and executes only the first tiny slice (say the next 10–20 ms), re-measures, and re-solves.

The use of MPC by Boston Dynamics has a bit of a legacy aspect to it because MPC was the state-of-art body control a few years ago - Atlas has been under development for a long time. Many newer humanoid makers however use Reinforcement Learning (RL) as their body control approach. Often, the RL sits on top of a simpler lower-level controller (e.g. PD). Others, like Figure AI, are pushing toward entirely neural-net based body control.

Perception

The next layer is perception: mapping what Atlas sees and senses into things that the rest of the brain knows.

One way Atlas perceives is by comparing CAD models of the object it’s looking for with the camera image it’s seeing. The neural net driving perception then comes up with an initial “pose estimate” of the object, which is basically position + orientation. The network continually refines this pose estimate until it matches a known object with high confidence. CAD-matching is not all of it though. Learned perception — neural networks trained on real images — increasingly handle messier situations like confusing lighting, partially hidden objects, or anything that doesn't match well to its CAD drawing.

For a factory robot like Atlas, CAD matching isn’t as laborious as it sounds. Why? Because: factories are full of standard parts with CAD models supplied by manufacturers, and those can be stored in memory. Memory is cheap! This is why when you are evaluating a humanoid robot you may first want to see evidence of factory work.

Boston Dynamics also tells us how Atlas navigates itself around obstacles, knows where to plant its feet etc. It starts with a simple course map that’s like a cheat sheet: the next thing is a box, then a gap, then another platform—do a step, then a jump, then a landing. As it moves, its depth camera measures what’s actually there, and the perception system locks onto the real obstacle surfaces and keeps tracking them as they shift in Atlas’ view. The planner then uses the map to know which obstacle matters next, and uses the live tracking to know exactly where it is right now, placing each footstep relative to those tracked. This way Atlas can stay on course while adapting to small differences from its internal map in the real world.

Action Policy

Action Policy is the layer people often mean when they talk about “robot intelligence”. This is where robot perceptions and goals are translated into specific actions.

Historically Atlas’ action policy consisted of “skills” that were engineered per task. That approach can be great in some situations but it is not very scalable. New tasks mean engineers have to get down to the drawing board, build new logic and add to the list of recipes.

The new direction is the shift toward foundation models of action policy. These are deep learning based, trained from demonstrations and adaptable across tasks. Typically they belong to the “VLA” class i.e. Vision + Language instruction → Action. That just means that the VLA policy combines what the robot is perceiving with the task (“language”), to generate steps that need to be perform over the next short time period - typically 10s of milliseconds.

For Atlas, the current policy model comes from its collaboration with Toyota’s Research Institute (TRI). Toyota has built a “Large Behavior Model (LBM)” which is a VLA that maps images + body orientation + a language prompt directly to full-robot actions. The LBM updates Atlas’ actions 30 times per second for smooth motion.

Toyota’s LBM is not the only game in town. Google’s Deepmind Gemini has its Robotics 1.5 VLA model, Nvidia has the GR00T N1, and there are some open source models as well. It’s possible that Atlas’ policy choice may change going forward or we could end up with several versions running different VLA policies.

The Planner

The action policy can execute a skill sequence for a while, and that can look “high level.” But factories need a different kind of high level too.

Someone (or something) has to interpret larger goals, break them into steps, check whether a step succeeded, and decide what to do when the world doesn’t match expectations. That’s the difference between “a robot can execute a trained behavior” and “a robot can run a workflow.”

Historically, this layer meant explicit maps and hand-built optimization routines that produced footsteps, grasps, and trajectories. The newer direction is embodied reasoning (ER) based on deep learning neural nets. ER models are also foundation models, but trained on multi-modal data like images, videos etc to go beyond action policy level reasoning.

Here the recent Google DeepMind framing is useful. DeepMind distinguishes between its (action policy) VLA model and its Gemini ER, which specializes in understanding physical spaces, planning, and logical decisions. DeepMind explicitly says ER does not directly control limbs; it provides high-level insights to guide the action policy model which then in turn does the physical controls. Atlas could be heading toward using Gemini ER given its close partnership with DeepMind.

If a robot maker wants to outsource an ER layer pre-built for humanoids, there aren’t many choices. Gemini ER itself isn’t off-the-shelf. It is offered to developers via an API - which means that it runs on Deepmind’s cloud, which creates a close dependency on Google.

NVIDIA’s Cosmos Reason models are among the very few robotics ER layers that are entirely available off-the-shelf. It wouldn’t be all that surprising if Atlas is trying that out as well.

Now that we know the layers, let’s revisit what demos are actually showing us.

Matching brain stacks to demos

When looking at a demo, what one needs to understand is which layers of the brain stack are engaged. The same robot can look very capable in one video and clumsy in another, simply because different layers of the brain stack are operating.

In a fully autonomous run, the robot should be doing the whole loop: it must perceive the scene, decide what to do next, execute actions, and recover when reality doesn’t match expectations. Some “tells” of full autonomy are deliberate movement, pauses while the robot re-estimates things and occasional “uh-oh” behaviors like re-grasping or backing off and retrying. Here’s a full brain stack demo from Figure AI of their latest Figure 03 unloading a dishwasher:

Figure 03 loading/unloading a dishwasher

Notice at 3:40 how the robot in a very human way gives the dishwasher cover a light kick from below to raise it enough to close it. The overall movement is still quite deliberate, nowhere near the speed of human confidence. But the robot is clearly reasoning through the challenges of manipulating the dishwasher. Figure 03 has a more integrated brain layer system, the Helix 02, than Atlas, but conceptually it still follows the same planner-policy-control logic.

The next lower operating mode is guided autonomy (often via tablet steering): a human gives higher-level nudges—“go to this station,” “pick that,” “retry from here”—while the robot handles balance, foot placement, collision-aware motion, and sometimes even grasp selection and sequencing. Here the planning layer will probably still not be engaged (or non-existent) but the action policy typically would be. This middle mode is common in real deployments because it allows for autonomy’s scalability while keeping humans available for exceptions.

A still lower mode is teleoperation, where a human controls the short-horizon details like what to reach for, when to move, which path to take. Under teleop the robot still leans heavily on the body controller layer to keep itself balanced. The perception layer may also be used for camera views or pose tracking. But typically the robot’s planner (if it has one) is entirely over-ridden and mostly the action policy as well. In teleop the robot is basically a drone under human remote-control. There’s many entertaining teleop videos out there, e.g. the 1X Neo home robot subjected to WSJ’s skeptical eye and the famous Miami video of Optimus, strongly suspected to be teleop because of the gesture of a person taking off a VR headset as the robot um, faints.

On a similar level of brain activation as teleop would be motion capture. This is what I referred to at the beginning of the article. Here, the robot re-plays a motion sequence captured through sensor recordings of human movement. Motion capture “playback” by a robot often relies even more heavily on reinforcement learning and exquisite body control because the sequences are likely to be more advanced than what a teleop operator would typically make a robot do. On the flip side, teleop still has room for the unexpected because there’s a human operator. So a teleoperated robot may need to be a bit more robust to staying balanced under unexpected movements - compared to a motion capture routine.

Motion capture demos are heavily used for showcasing, because the heavy training on a choreographed sequence allows the manufacturer to display incredible feats - like G1’s kung-fu performances. That doesn’t make these demos fake; it just means the planner/policy/perception layers are not in the picture. This is not to say the Unitree G1 has no capability beyond awesome body control - it does have higher capabilities and we have evidence for it. Take this recent video of a Unitree G1 in a factory setting:

Unitree G1 trying its hand at factory work

In this video, the G1 is utilizing an Embodied AI model - essentially a full brain stack. But notice how the robot works in jerky, deliberate motions. The kung-fu fluidity is nowhere to be seen here. The video is also sped up 2x - which is another tell - the real workflow was even slower.

Onwards to 2028

The current state of the art in humanoid robotics is awe-inspiring body control but slow, jerky full autonomy.

Even action policies have advanced very fast, with foundation models like Toyota’s LBM and Google’s Robotics VLA improving month over month. Perception in structured environments like factories is workable today. But the planner — the layer that decides what to do next when something unexpected happens — still has a lot of catching up to do. Humanoid demos today typically have no planner at all or rely on a human to fill that gap.

However, this doesn’t mean the future is bleak. Quite the contrary. With the integration of ER models in real robots, we now know what the bridge to a fully autonomous future looks like - and we have even built some of it.

This is a clear commercial commitment. Hyundai plans to deploy Atlas on its EV assembly lines in Georgia starting 2028, beginning with parts sequencing and expanding to component assembly by 2030. A new robotics factory capable of producing 30,000 Atlas units per year is being built to support it. Not just Hyundai, Figure AI too is partnered with BMW and is building a factory to produce 100,000 robots in the next few years. There’s other reasonably concrete deployments planned or ongoing e.g. at Schaeffler which intends to use Agility’s Digit and Germany-based Neura.

China’s Xiaomi has a 5-year plan for building EVs using an advanced version of its humanoid CyberOne platform.

Whew, right? Not to mention that Tesla is targeting million-plus Optimus units by 2028 and claims production begins this summer. We can be forgiven for taking this last one with a dose of salt - Tesla said it would be using Optimus workers internally in 2025, which did not happen.

The leading robot makers are treating 2028 as the moment the planner layer has to be ready for real work.

Here’s my rough bet: by late 2027, the most-watched robot videos on the internet won’t have backflips. They will show humanoids working fluidly in unfamiliar environments, full brain stack engaged, figuring things out — pausing, re-estimating, adjusting, completing. No choreography or motion capture. Just the whole brain working seamlessly without visible effort.